ETL vs ELT: Modern Data Pipeline Architecture

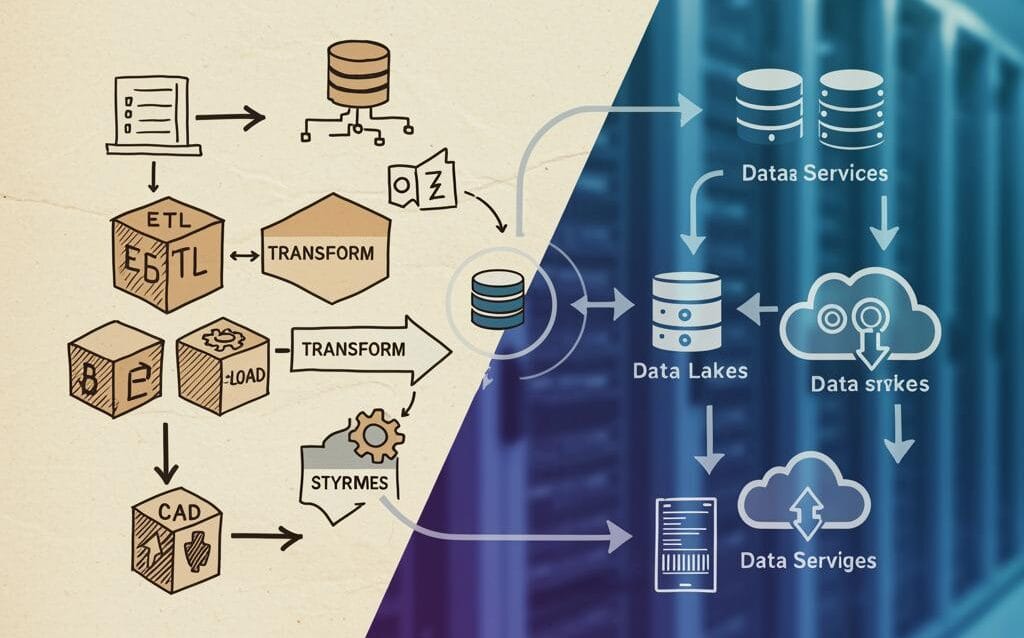

Businesses need efficient, scalable ways to extract, transform, and load data for analysis and decision-making. Two prominent approaches to building data pipelines are ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform). Both move data from source systems into an analytical store, but they differ in where and when the transformation happens, which affects performance, scalability, and overall cost. This post compares ETL and ELT, covering their key differences, advantages, and disadvantages, to help you choose the right architecture for your data needs.

Understanding ETL: The Traditional Approach

What is ETL?

ETL, or Extract, Transform, Load, is a traditional data integration process that involves extracting data from various source systems, transforming it into a consistent format, and then loading it into a data warehouse or data mart. The transformation step, which includes cleaning, validating, and aggregating data, is performed before loading it into the target system.

How ETL Works:

- Extraction: Data is extracted from diverse sources, such as databases, applications, and flat files.

- Transformation: This is the core of ETL. Data is cleansed, validated, and reshaped according to business rules before it reaches the target. Typical steps include data type conversions, joining tables, aggregating data, and applying business logic.

- Loading: The transformed data is loaded into the target data warehouse or data mart.
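
The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the CSV content, the `customer_totals` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for the data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical source data; a real pipeline would read from databases, applications, or files.
RAW_CSV = """order_id,customer,amount
1,alice,10.50
2,bob,not_a_number
3,alice,4.50
"""

def extract(text):
    """Extraction: read source rows as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transformation: convert types, drop invalid rows, aggregate per customer."""
    totals = {}
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # validation: skip rows whose amount is not numeric
        customer = row["customer"].strip().lower()
        totals[customer] = totals.get(customer, 0.0) + amount
    return sorted(totals.items())

def load(conn, records):
    """Loading: only the transformed result reaches the target."""
    conn.execute("CREATE TABLE customer_totals (customer TEXT PRIMARY KEY, total REAL)")
    conn.executemany("INSERT INTO customer_totals VALUES (?, ?)", records)
    conn.commit()

conn = sqlite3.connect(":memory:")  # stand-in for the data warehouse
load(conn, transform(extract(RAW_CSV)))
result = conn.execute(
    "SELECT customer, total FROM customer_totals ORDER BY customer"
).fetchall()
print(result)
```

Note that the invalid row never reaches the target: the warehouse sees only the validated, aggregated output, and the raw rows are not kept there.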

Advantages of ETL:

- Data Quality and Consistency: Because transformations run before loading, only cleansed and validated data reaches the target system, which supports reliable analytics.

- Security: Sensitive data can be masked or anonymized during the transformation process, protecting it from unauthorized access in the target system.

- Legacy Systems Compatibility: ETL is well-suited for integrating data from legacy systems that may not be compatible with modern data platforms.
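
To illustrate the security advantage listed above, here is a hedged sketch of masking sensitive fields during the transformation step. The field names, the salt, and the helper functions are hypothetical. A salted hash like this is pseudonymization rather than full anonymization, so treat it as an illustration only.

```python
import hashlib

def pseudonymize(value, salt):
    """Replace an identifier with a salted SHA-256 digest (truncated for readability)."""
    return hashlib.sha256((salt + value).encode("utf-8")).hexdigest()[:16]

def mask_email(email):
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain

def mask_record(record, salt):
    """Return a copy of the record that is safe to load into the target."""
    return {
        "customer_key": pseudonymize(record["customer_id"], salt),
        "email": mask_email(record["email"]),
        "amount": record["amount"],  # non-sensitive fields pass through unchanged
    }

# Hypothetical input record and salt.
record = {"customer_id": "C-1001", "email": "alice@example.com", "amount": 42.0}
masked = mask_record(record, salt="demo-salt")
print(masked)
```

Because masking happens before the load, the original identifier and full email address are never written to the target system.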

Disadvantages of ETL:

- Resource Intensive: The transformation step can be resource-intensive, requiring powerful servers and specialized ETL tools.

- Bottlenecks: Because all data must pass through the transformation step before it can be loaded, that step can become a bottleneck and slow down the overall data pipeline.

- Limited Scalability: Scaling ETL infrastructure can be complex and expensive, especially when dealing with large volumes of data.

Exploring ELT: The Modern Paradigm

What is ELT?

ELT, or Extract, Load, Transform, is a modern data integration process that reverses the order of transformation and loading. Data is extracted from various source systems, loaded directly into a data warehouse or data lake, and then transformed within the target system. This approach leverages the processing power and scalability of modern data platforms, such as cloud data warehouses.

How ELT Works:

- Extraction: Data is extracted from diverse sources, similar to ETL.

- Loading: Data is loaded directly into the target data warehouse or data lake in its raw format.

- Transformation: Data is transformed within the target system using its native processing capabilities, such as SQL or distributed computing frameworks like Spark.
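
The same flow can be sketched with the order reversed. In this minimal example an in-memory SQLite database stands in for a cloud data warehouse, and the table names and rows are hypothetical. The raw rows are loaded as text, and the cleanup and aggregation run afterwards as SQL inside the target.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the target warehouse

# Loading: raw rows go in unchanged, including the invalid one.
raw_rows = [
    ("1", "alice", "10.50"),
    ("2", "bob", "not_a_number"),
    ("3", "alice", "4.50"),
]
conn.execute("CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

# Transformation: runs inside the target using its own SQL engine.
# The GLOB filter is a deliberately crude numeric check for this sketch.
conn.execute("""
    CREATE TABLE customer_totals AS
    SELECT lower(trim(customer)) AS customer,
           SUM(CAST(amount AS REAL)) AS total
    FROM raw_orders
    WHERE amount GLOB '[0-9]*'
    GROUP BY lower(trim(customer))
""")

totals = conn.execute(
    "SELECT customer, total FROM customer_totals ORDER BY customer"
).fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
print(totals, raw_count)
```

Unlike the ETL flow, the raw table stays available in the target, so the invalid row can still be inspected or handled differently later.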

Advantages of ELT:

- Scalability and Performance: ELT pushes transformation work onto the target platform, which can scale its compute to the workload, so large transformations can often be processed faster than on a separate ETL server.

- Flexibility: Raw data is stored in the target system, allowing for greater flexibility in data analysis and exploration. Different transformations can be applied to the same data without requiring re-extraction.

- Cost-Effectiveness: ELT can be more cost-effective than ETL, especially when using cloud-based data warehouses that offer pay-as-you-go pricing.

- Faster Time to Insights: Loading data first allows analysts to access raw data quickly, enabling faster prototyping and experimentation.
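
The flexibility point above can be illustrated with two independent transformations defined over the same raw table, with no re-extraction in between. This is a sketch under assumptions: SQLite stands in for the warehouse, and the table, view names, and rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_orders (customer TEXT, day TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [
        ("alice", "2024-01-01", 10.0),
        ("bob", "2024-01-01", 5.0),
        ("alice", "2024-01-02", 20.0),
    ],
)

# Two different transformations over the same raw data, expressed as views.
conn.execute("""
    CREATE VIEW revenue_by_day AS
    SELECT day, SUM(amount) AS revenue FROM raw_orders GROUP BY day
""")
conn.execute("""
    CREATE VIEW revenue_by_customer AS
    SELECT customer, SUM(amount) AS revenue FROM raw_orders GROUP BY customer
""")

by_day = conn.execute("SELECT day, revenue FROM revenue_by_day ORDER BY day").fetchall()
by_customer = conn.execute(
    "SELECT customer, revenue FROM revenue_by_customer ORDER BY customer"
).fetchall()
print(by_day)
print(by_customer)
```

Adding a third view later would require no change to the extraction or loading steps.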

Disadvantages of ELT:

- Data Governance Challenges: Storing raw data in the target system requires robust data governance policies to ensure data quality and compliance.

- Security Concerns: Sensitive data may be exposed in its raw format if not properly secured during the loading and transformation process.

- Target System Dependency: ELT relies heavily on the processing capabilities of the target system. If the target system is not powerful enough, the transformation process can be slow.

Choosing the Right Architecture: ETL or ELT?

The choice between ETL and ELT depends on several factors, including:

- Data Volume and Velocity: For large volumes of data and high data velocity, ELT is generally a better choice due to its scalability and performance.

- Data Complexity: If the data requires complex transformations, ETL may be more suitable, especially if the target system has limited processing capabilities.

- Data Quality Requirements: If data quality is paramount, ETL can ensure data consistency and accuracy before loading.

- Target System Capabilities: If the target system is a powerful data warehouse or data lake with robust processing capabilities, ELT is a viable option.

- Security and Compliance: Consider the security and compliance requirements of the data. ETL can provide better control over data security during the transformation process.

- Cost: Evaluate the cost of infrastructure, software, and expertise for both ETL and ELT. Cloud-based ELT solutions can be more cost-effective for large datasets.

In practice, many organizations adopt a hybrid approach, combining ETL and ELT to leverage the strengths of both architectures. For example, they might use ETL to cleanse and transform data from legacy systems before loading it into a data lake, and then use ELT to perform further transformations within the data lake for specific analytical purposes.
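
A hybrid pipeline of this kind might look like the following sketch. The legacy date and decimal formats, the `orders` table, and the `cleanse` helper are assumptions made for illustration, and SQLite again stands in for the data lake or warehouse.

```python
import sqlite3
from datetime import datetime

# Hypothetical legacy export: day/month/year dates and comma decimal separators.
LEGACY_ROWS = [
    {"date": "31/12/2023", "amount": "10,50"},
    {"date": "01/01/2024", "amount": "4,50"},
    {"date": "15/01/2024", "amount": "5,00"},
]

def cleanse(row):
    """ETL-style step: normalize legacy formats before loading."""
    day = datetime.strptime(row["date"], "%d/%m/%Y").date().isoformat()
    amount = float(row["amount"].replace(",", "."))
    return (day, amount)

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (day TEXT, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [cleanse(r) for r in LEGACY_ROWS])

# ELT-style step: a further transformation runs inside the target.
monthly = conn.execute("""
    SELECT substr(day, 1, 7) AS month, SUM(amount) AS revenue
    FROM orders
    GROUP BY month
    ORDER BY month
""").fetchall()
print(monthly)
```

The split keeps format repair close to the legacy source while leaving analytical reshaping to the target, where it can be changed without touching the extraction code.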

Real-World Examples

ETL Example: A retail company uses ETL to extract sales data from point-of-sale systems, customer data from CRM, and inventory data from warehouse management systems. The data is transformed to standardize formats, calculate sales metrics (e.g., total revenue, average order value), and then loaded into a data warehouse for reporting and analysis. The transformation step is crucial for ensuring that all data is consistent and accurate before it’s used for decision-making.
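
As a rough illustration of the transformation in this example, the sketch below standardizes a hypothetical point-of-sale export (amounts in cents) and computes total revenue and average order value, taken here as total revenue divided by the number of orders. The field names and sample rows are assumptions, not the retailer's actual schema.

```python
# Hypothetical point-of-sale rows with amounts stored in cents.
POS_ROWS = [
    {"order_id": "A1", "amount_cents": 2000},
    {"order_id": "A2", "amount_cents": 3000},
    {"order_id": "A3", "amount_cents": 4000},
]

def standardize(row):
    """Convert a POS row to the common format used downstream (amount in currency units)."""
    return {"order_id": row["order_id"], "amount": row["amount_cents"] / 100}

def sales_metrics(orders):
    """Compute total revenue and average order value for a list of standardized orders."""
    total = sum(order["amount"] for order in orders)
    average = total / len(orders) if orders else 0.0
    return {"total_revenue": total, "average_order_value": average}

metrics = sales_metrics([standardize(row) for row in POS_ROWS])
print(metrics)
```

In an ETL pipeline, only the standardized rows and computed metrics would be loaded into the data warehouse.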

ELT Example: A financial services company uses ELT to analyze customer transaction data. Raw transaction data is loaded directly into a cloud data warehouse like Snowflake or BigQuery. Data scientists then use SQL or Spark to perform complex analysis, such as fraud detection, risk assessment, and customer segmentation. The advantage here is the ability to quickly analyze the raw data and experiment with different analytical models without having to pre-define all the transformations.
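
The kind of ad-hoc analysis described here can be sketched as a query over the raw table. The rule below, which flags any transaction larger than three times the customer's average, is a deliberately simple stand-in for real fraud detection. The table, rows, and threshold are hypothetical, and SQLite stands in for a warehouse such as Snowflake or BigQuery.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_transactions (txn_id INTEGER, customer TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO raw_transactions VALUES (?, ?, ?)",
    [
        (1, "alice", 20.0),
        (2, "alice", 25.0),
        (3, "alice", 30.0),
        (4, "alice", 500.0),
        (5, "bob", 40.0),
        (6, "bob", 45.0),
    ],
)

THRESHOLD = 3  # hypothetical multiplier; easy to change and re-run against the raw data

flagged = conn.execute(
    """
    SELECT t.txn_id, t.customer, t.amount
    FROM raw_transactions AS t
    JOIN (
        SELECT customer, AVG(amount) AS avg_amount
        FROM raw_transactions
        GROUP BY customer
    ) AS s ON s.customer = t.customer
    WHERE t.amount > ? * s.avg_amount
    ORDER BY t.txn_id
    """,
    (THRESHOLD,),
).fetchall()
print(flagged)
```

Because the raw transactions are already in the target, trying a different threshold or a different rule is just another query, with no change to the loading step.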

Conclusion

ETL and ELT are both valuable data integration architectures, each with its own strengths and weaknesses. ETL is a well-established approach that prioritizes data quality and security before data reaches the target, while ELT leverages the power and scalability of modern data platforms and keeps raw data available for later use. By weighing your data volume, complexity, quality requirements, security needs, and target system capabilities, you can choose the architecture, or the hybrid of the two, that best suits your needs.